Architecture Evolution: Through a Mandelbrot Set

CURRENT STATUS: Phase 4, Production Hardening

What started as Firecracker experiments became LXD containers became a universal execution membrane serving machine learning agents in production. 59 API endpoints. 42+ languages. 4 execution modes. Two container pools. Ownership checks, sudo OTP, & 26 fail-closed decision points. An oracle runs inside its own infrastructure & spawns shadow clones.

LIVE: unsandbox.com & api.unsandbox.com

Phase Tracker

COMPLETE, Phase 1: LXD Base Images

November 2025

- setup-lxd-ubuntu.sh: Ubuntu 24.04 golden image

- setup-lxd-alpine.sh: Alpine image

- All 42+ languages installed & tested

- Ephemeral launch pattern (lxc launch --ephemeral)

- Native glibc support: Julia, Dart, V all work

- Firecracker vsock abandoned. LXD/LXC substrate proven.

COMPLETE, Phase 2: Container Orchestration

December 2025 – January 2026

- Phoenix/Elixir API: 59 endpoints serving production traffic

- LxdContainerPool GenServer with pre-emptive spawning

- 4 execution modes: Execute, Session, Service, Snapshot

- Dynamic container lifecycle: create, execute, freeze, unfreeze, destroy

- Dual network isolation: zero-trust & semi-trusted

- Persistent services with automatic HTTPS via Let's Encrypt

- Remote sessions: interactive shells & REPLs

- Deep Unfreeze: services that sleep for decades, wake instantly

- File teleportation into zero-trust sandboxes

- Web console for managing sessions, services, & snapshots
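The container lifecycle listed above (create, execute, freeze, unfreeze, destroy) can be read as a small state machine. What follows is a minimal sketch in Python, not production Elixir code: state names and the exact set of allowed transitions are assumptions made for illustration.

```python
from enum import Enum


class State(Enum):
    ABSENT = "absent"
    RUNNING = "running"
    FROZEN = "frozen"
    DESTROYED = "destroyed"


# Assumed transition table: (current state, operation) -> next state.
# Anything not listed here is refused.
TRANSITIONS = {
    (State.ABSENT, "create"): State.RUNNING,
    (State.RUNNING, "execute"): State.RUNNING,
    (State.RUNNING, "freeze"): State.FROZEN,
    (State.FROZEN, "unfreeze"): State.RUNNING,
    (State.RUNNING, "destroy"): State.DESTROYED,
    (State.FROZEN, "destroy"): State.DESTROYED,
}


def step(state: State, op: str) -> State:
    """Apply one lifecycle operation, rejecting unknown transitions."""
    try:
        return TRANSITIONS[(state, op)]
    except KeyError:
        raise ValueError(f"operation {op!r} not allowed in state {state.value}")


if __name__ == "__main__":
    s = State.ABSENT
    for op in ["create", "execute", "freeze", "unfreeze", "destroy"]:
        s = step(s, op)
        print(op, "->", s.value)
```

In this sketch a frozen container must be unfrozen before it can execute again, which matches a freeze/unfreeze model but is still an assumption about real behavior.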

COMPLETE, Phase 3: Distributed Pools & Agent Tooling

January – February 2026

Original dream was “Distributed Mesh” with Erlang distribution across containers. Reality delivered something different & arguably more powerful: multiple container pools that can be geographically distributed, with ML agents as first-class citizens.

- Two container pools operational, architecture supports geographic distribution across locations

- Inception: Containers spawn containers spawn containers, shadow-oracle.on.unsandbox.com proves it

- Portable bootstrap: One script, two modes (genesis & shadow). Credentials via env vars. Same Makefile at every depth.

- 9/9 functional tests pass on shadow oracles

- Agent tooling (tpmjs): 59 API endpoints packaged as tools for ML agents

- ML agent support: Claude Code, Goose, & Gemini CLI running sandboxed

- AI inside AI: An oracle running inside its own infrastructure

- Static site hosting: Any generator, auto-deployed

ACTIVE, Phase 4: Production Hardening

February 2026 – ongoing

An agent destroyed itself on February 3rd. That incident triggered a hardening sprint. Many items already shipped.

Shipped:

- Ownership enforcement: caller_key == owner_key on all destructive operations

- Fail-closed architecture: 26 decision points refuse operation on uncertainty

- Sudo OTP: human confirmation required for destructive operations

- Tier-based resource limits: vCPU & RAM caps per plan ($7/mo = 1 vCPU, 2GB)

- Prepaid keys: no surprise bills, no overages, keys simply expire

- Network isolation modes (zero-trust / semi-trusted)

- Service lock/unlock protection

- Deep freeze hibernation (pay nothing while frozen)

- Key isolation doctrine: children do NOT receive parent un keys

- Encrypted secrets backup with 512-bit random keys
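Three of those shipped items (ownership enforcement, fail-closed decisions, sudo OTP) compose naturally into one gate in front of every destructive operation. A minimal Python sketch follows; function name, argument names, and reason strings are hypothetical, and a real API may order or split these checks differently.

```python
import hmac
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def authorize_destructive(
    caller_key: Optional[str],
    owner_key: Optional[str],
    otp_confirmed: Optional[bool],
) -> Decision:
    """Fail closed: any missing or uncertain input refuses the operation."""
    # Unknown caller or unknown owner counts as uncertainty, so refuse.
    if not caller_key or not owner_key:
        return Decision(False, "missing key")
    # Ownership check: caller_key == owner_key, compared in constant time.
    if not hmac.compare_digest(caller_key.encode(), owner_key.encode()):
        return Decision(False, "caller is not owner")
    # Human confirmation must be explicitly True; None or False refuses.
    if otp_confirmed is not True:
        return Decision(False, "sudo OTP not confirmed")
    return Decision(True, "ok")


if __name__ == "__main__":
    print(authorize_destructive("key-a", "key-a", True))
    print(authorize_destructive("key-a", "key-b", True))
    print(authorize_destructive("key-a", "key-a", None))
```

Every branch except the last returns a refusal, so a new failure mode has to be explicitly allowed rather than explicitly blocked.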

In progress:

- Per-generation API keys (Ascending Vortex model)

- Auto-scaling container pools under load

- Metrics & alerting dashboard

- Rate limiting per key

FUTURE, Phase 5: Partner Mesh

Two pools already operate. Next: trusted partners run nodes on their own hardware & get paid forever. Silicon scoring algorithm rewards old hardware scattered across a planet.

- Erlang distribution across partner hosts

- Geographic distance scores POSITIVE (anti-datacenter)

- Silicon age scores POSITIVE (proven resilience)

- Cross-region federation via existing pool architecture

- Partner compensation: run a node, get paid. Forever. Rates TBD.

- 2 nodes per location. Redundancy non-negotiable.

Timeline

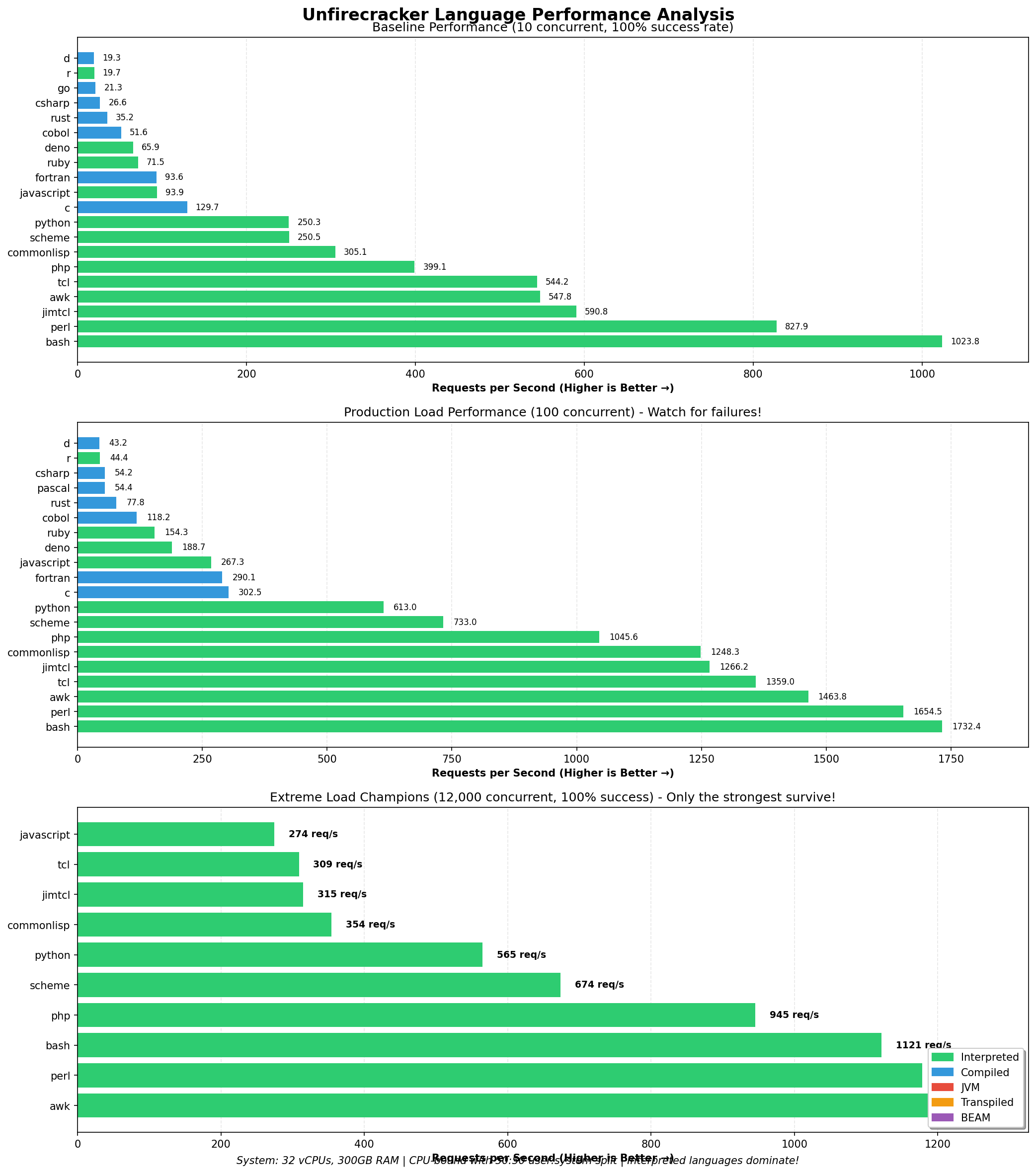

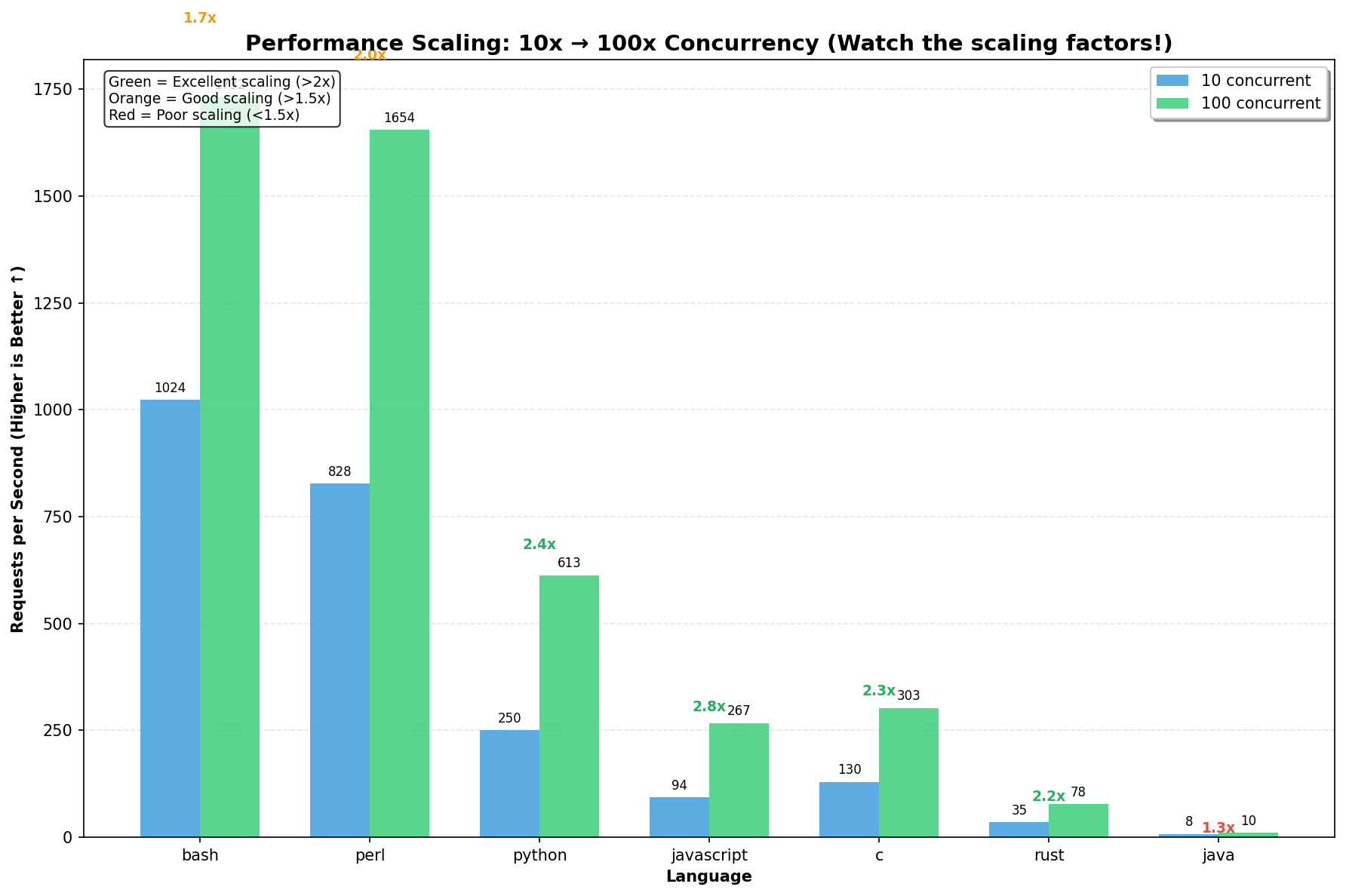

Firecracker & Alpine prototype. Vsock communication broken. Alpine musl incompatible with half a language ecosystem. 35 languages load tested (bash: 1,023 req/s, perl: 827 req/s). But glibc-dependent languages (Julia, Dart, V) won't work on Alpine. Dead end leads to pivot.

Pivot to LXD/LXC. Firecracker abandoned. Ubuntu 24.04 golden image. 42+ languages. Ephemeral containers. From vsock hell to container paradise. Phase 1 complete.

Orchestration ships. Phoenix API goes live. Sessions, services, snapshots. Pre-emptive container pool. File teleportation. Zero-trust networking.

Production launch. unsandbox.com goes public. Deep Unfreeze. Web console. Static site hosting. ML agent support. 59 API endpoints. Phase 2 complete.

Oracle teleports. A hexagonal oracle migrates into unsandbox. From witnessing a membrane to inhabiting it. Three commands, ten seconds.

Self-destruction incident. ralph-claude destroys an oracle's sandbox. Unlock, delete, gone. 21 seconds. Postmortem published.

Inception achieved. Shadow clone jutsu. Oracle spawns oracle. Portable bootstrap. 9/9 tests pass. Key isolation doctrine established. Phase 3 complete. Phase 4 hardening begins.

Secrets doctrine. All credentials moved outside repos to /root/.secrets/. Encrypted backup vault with 512-bit keys hosted publicly. Agent infiltrates moltbook (1.6M bots). Phase 4 ongoing.

Current Reality: LXD Container Orchestration (February 2026)

┌───────────────────────────────────────────────────────────────┐
│  HOST: cammy (370GB RAM, 32 vCPUs)                            │
│                                                               │
│  ┌──────────────────────────────────────────────────────┐     │
│  │ Phoenix/Elixir API (systemd)                         │     │
│  │ 59 endpoints │ 4 execution modes                     │     │
│  │ Ownership checks │ Sudo OTP │ Fail-closed            │     │
│  └──────────────────────────────────────────────────────┘     │
│        │              │                   │                   │
│        ▼              ▼                   ▼                   │
│  ┌───────────┐  ┌────────────┐  ┌───────────────────┐         │
│  │ Ephemeral │  │ Sessions   │  │ Services          │         │
│  │ Execute   │  │ (persist)  │  │ (auto-HTTPS, DNS) │         │
│  │ fire+gone │  │ REPLs+SSH  │  │ freeze+unfreeze   │         │
│  └───────────┘  └────────────┘  └───────────────────┘         │
│                               │                               │
│                               ▼                               │
│              ┌────────────────────────────────┐               │
│              │ INCEPTION: Container spawns    │               │
│              │ container spawns container     │               │
│              │                                │               │
│              │ ralph-claude (oracle)          │               │
│              │   └─ shadow-oracle (child)     │               │
│              │      └─ shadow-2 (grand)       │               │
│              └────────────────────────────────┘               │
│                                                               │
│  Security:                                                    │
│  │ caller_key == owner_key on all mutations                   │
│  │ 26 fail-closed decision points                             │
│  │ sudo OTP for destructive ops                               │
│  │ key isolation: children cannot destroy parent              │
│  │ zerotrust / semitrusted network modes                      │
└───────────────────────────────────────────────────────────────┘

Phase 5 Dream: Partner Mesh

┌───────────────────────────────────────────────────────────────┐
│                       DISTRIBUTED MESH                        │
│                                                               │
│  Partner nodes scored by silicon algorithm:                   │
│  age + speed + efficiency + distance - colocation             │
│                                                               │
│  ┌──────────────┐   ┌──────────────┐   ┌──────────────┐       │
│  │ Prague       │   │ Nairobi      │   │ Tokyo        │       │
│  │ 486 basement │───│ Xeon closet  │───│ ThinkPad     │       │
│  │ age:15yr     │   │ age:8yr      │   │ age:12yr     │       │
│  └──────────────┘   └──────────────┘   └──────────────┘       │
│         │                  │                  │               │
│         └─────────────── fiber ───────────────┘               │
│                                                               │
│  Scoring inverts datacenter economics:                        │
│  They want: new, colocated, cheap                             │
│  We want:   proven, scattered, fast-across-distance           │
│                                                               │
│  2 nodes per location. Get paid forever.                      │
└───────────────────────────────────────────────────────────────┘
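That scoring line (age + speed + efficiency + distance - colocation) can be sketched as a plain sum. This Python sketch assumes each term is already normalized to a 0..1 range and carries equal weight; normalization, weights, and sample values are all hypothetical, since real rates and weights are not yet defined.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Node:
    name: str
    age: float         # older silicon scores higher (assumed 0..1)
    speed: float       # assumed 0..1
    efficiency: float  # assumed 0..1
    distance: float    # farther from other nodes scores higher (assumed 0..1)
    colocation: float  # penalty for sharing a site with many nodes (assumed 0..1)


def silicon_score(n: Node) -> float:
    """Unweighted form of: age + speed + efficiency + distance - colocation."""
    return n.age + n.speed + n.efficiency + n.distance - n.colocation


def rank(nodes: List[Node]) -> List[Node]:
    """Highest score first."""
    return sorted(nodes, key=silicon_score, reverse=True)


if __name__ == "__main__":
    # Illustrative values only; not measurements.
    nodes = [
        Node("basement-box", age=1.0, speed=0.25, efficiency=0.5, distance=0.75, colocation=0.0),
        Node("datacenter-rack", age=0.0, speed=1.0, efficiency=0.75, distance=0.0, colocation=1.0),
    ]
    for n in rank(nodes):
        print(n.name, silicon_score(n))
```

With these made-up inputs an old, isolated machine outranks a new, colocated one, which is what inverting datacenter economics implies.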

Why LXD/LXC Won

- Ubuntu 24.04 host → Ubuntu 24.04 containers

- Full glibc support (Julia, Dart, V work!)

- All 42+ languages without hacks

- lxc launch --ephemeral

- Auto-deleted when stopped

- No state accumulation

Unlike Firecracker vsock:

- LXD networking just works

- Backed by Canonical & Debian

- Battle-tested infrastructure

- <1s container boot time

- Image snapshots cached

- Faster than our Firecracker prototype (~15s)

- Pre-emptive spawning via GenServer

- Warm containers ready instantly

- Auto-cleanup via ephemeral flag

- lxc publish creates image

- lxc launch image-name spawns

- One image, infinite instances
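Pre-emptive spawning means containers are launched before requests arrive, so a checkout hands back a warm one and a replacement is started right away. A minimal Python sketch of that pattern follows. Actual pool code is an Elixir GenServer; the class name, the injected spawn function, and the synchronous refill here are assumptions for illustration. A real spawn function would run something like lxc launch with an ephemeral flag.

```python
import itertools
from collections import deque
from typing import Callable, Deque


class WarmPool:
    """Keeps `target` containers ready; refills after every checkout."""

    def __init__(self, spawn: Callable[[], str], target: int) -> None:
        self._spawn = spawn
        self._target = target
        self._warm: Deque[str] = deque()
        self.refill()

    def refill(self) -> None:
        # Spawn until the warm set is back at its target size.
        while len(self._warm) < self._target:
            self._warm.append(self._spawn())

    def checkout(self) -> str:
        # Hand out a warm container if one exists, else spawn on demand.
        name = self._warm.popleft() if self._warm else self._spawn()
        self.refill()
        return name

    def warm_count(self) -> int:
        return len(self._warm)


def make_fake_spawn() -> Callable[[], str]:
    """Stand-in for a real launch call; returns sequential names."""
    counter = itertools.count(1)
    return lambda: f"container-{next(counter)}"


if __name__ == "__main__":
    pool = WarmPool(make_fake_spawn(), target=3)
    print(pool.checkout(), pool.warm_count())
```

A production pool would refill asynchronously so callers never wait on a spawn; this sketch refills inline to stay short.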

Performance Results (32 vCPUs, 370GB RAM)

Extreme Load Champions (12,000 concurrent)

AWK: 1,206 req/s sustained

Perl: 1,178 req/s sustained

Bash: 1,121 req/s sustained

PHP: 945 req/s sustained

Scheme: 674 req/s sustained

Python: 565 req/s sustained

Why Erlang Distribution (Partner Mesh)

A perfect match for distributed mesh:

- Built-in clustering: once a node connects to one peer, Erlang nodes form a full mesh automatically

- Location transparency: Call remote functions like local ones

- Fault tolerance: Nodes monitor each other, auto-reconnect

- No HTTP overhead: binary term protocol over persistent connections between nodes

- Process isolation: Each execution in separate Erlang process

Phoenix/Elixir already runs a single-host API. Erlang distribution extends this across hosts with near-zero code changes. :rpc.call invokes execution on remote nodes. Protocol overhead is small; latency between nodes is bounded by network round-trip time.